It seems self-evident that companies should try to satisfy their customers. Satisfied customers usually return and buy more, they tell other people about their experiences, and they may well pay a premium for the privilege of doing business with a supplier they trust. Statistics are bandied around that suggest that the cost of keeping a customer is only one tenth of the cost of winning a new one. Therefore, when we win a customer, we should hang on to them. Conducting a customer satisfaction research survey is a good way to start measuring where you stand in terms of customer loyalty.

Why is it that we can think of more examples of companies failing to satisfy us than of companies satisfying us? There could be a number of reasons for this. When we buy a product or service, we expect it to be right. We don’t jump up and down with glee saying “isn’t it wonderful, it actually worked”. That is what we paid our money for. Add to this our world of ever more exacting standards. We now have products available to us that would astound our great-grandparents and yet we quickly become used to them. The bar is getting higher and higher. At the same time our lives are ever more complicated with higher stress levels. Delighting customers and achieving high customer satisfaction scores in this environment is ever more difficult. And even if your customers are completely satisfied with your product or service, significant chunks of them could leave you and start doing business with your competition.

A market trader has a continuous finger on the pulse of customer satisfaction. Direct contact with customers indicates what he is doing right or where he is going wrong. Such informal feedback is valuable in any company but hard to formalize and control in anything much larger than a corner shop. For this reason customer surveys are necessary to measure and track customer satisfaction.

Developing a customer satisfaction research program is not just about carrying out a customer service survey. Surveys provide the reading that shows where attention is required but in many respects, this is the easy part. Very often, major long lasting improvements need a fundamental transformation in the company, probably involving training of the staff, possibly involving cultural change. Customer satisfaction research is critical in identifying these areas for improvement. The result should be financially beneficial with less customer churn, higher market shares, premium prices, stronger brands and reputation, and happier staff. However, there is a price to pay for these improvements. Costs will be incurred in the customer satisfaction research survey. Time will be spent working out an action plan. Training may well be required to improve the customer service. The implications of customer satisfaction studies go far beyond the survey itself and will only be successful if fully supported by the echelons of senior management.

There are six parts to any customer satisfaction research program:

Some products and services are chosen and consumed by individuals with little influence from others. The choice of a brand of cigarettes is very personal and it is clear who should be interviewed to find out satisfaction with those cigarettes. But who should we interview to determine the satisfaction with breakfast cereal? Is it the person who buys the cereal (usually a parent) or the person who consumes it (often a child)? And what of a complicated buying decision in a business-to-business situation? Who should be interviewed in a customer satisfaction research survey for a truck manufacturer – the driver, the transport manager, the general management of the company? In other b2b markets there may well be influences on the buying decision from engineering, production, purchasing, quality assurance, plus research and development. Because each department evaluates suppliers differently, the customer satisfaction survey will need to cover the multiple views.


The adage in market research that we turn to again and again is the need to ask the right question of the right person. Finding that person in customer loyalty research may require a compromise with a focus on one person – the key decision maker; perhaps the transport manager in the example of the trucks. If money and time permit, different people could be interviewed and this may involve different interviewing methods and different questions.

The traditional first in line customer is an obvious candidate for measuring customer satisfaction. But what about other people in the channel to market? If the products are sold through intermediaries, we are even further from our customers. A good customer satisfaction program will include at least the most important of these types of channel customers, perhaps the wholesalers as well as the final consumers.

One of the greatest headaches in the planning of a b2b customer satisfaction survey is the compilation of the sample frame – the list from which the sample of respondents is selected. Building an accurate, up-to-date list of customers, with telephone numbers and contact details is nearly always a challenge. The list held by the accounts department may not have the contact details of the people making the purchasing decision. Large businesses may have regionally autonomous units and there may be some fiefdom that says it doesn’t want its customers pestered by market researchers. The sales teams’ Christmas card lists may well be the best lists of all but they are kept close to the chest of each sales person and not held on a central server. Building a good sample frame nearly always takes longer than was planned but it is the foundation of a good customer satisfaction project.

Customer satisfaction surveys are often just that – surveys of customers without consideration of the views of lost or potential customers. Lapsed customers may have stories to tell about service issues while potential customers are a good source of benchmark data on the competition. If a customer survey is to embrace non-customers, the compilation of the sample frame is even more difficult. The quality of these sample frames influences the results more than any other factor since they are usually outside the researchers’ control. The questionnaire design (further reading: The 7 Steps of Questionnaire Design) and interpretation are within the control of the researchers and these are subjects where they will have considerable experience.

In customer satisfaction research we seek the views of respondents on a variety of issues that will show how the company is performing and how it can improve. This understanding is obtained at a high level (“how satisfied are you with ABC Ltd overall?”) and at a very specific level (“how satisfied are you with the clarity of invoices?”).

High level issues are included in most customer satisfaction surveys and they could be captured by questions such as:

It is at the more specific level of questioning that things become more difficult. Some issues are of obvious importance and every supplier is expected to perform to a minimum acceptable level on them. These are the hygiene factors. If a company fails on any of these issues they would quickly lose market share or go out of business. An airline must offer safety but the level of in-flight service is a variable. These variables such as in-flight service are often the issues that differentiate companies and create the satisfaction or dissatisfaction.

Working out what questions to ask at a detailed level means seeing the world from the customers’ points of view. What do they consider important? These factors or attributes will differ from company to company and there could be a long list. They could include the following:

The list is not exhaustive by any means. There is no mention above of environmental issues, sales literature, frequency of representatives’ calls or packaging. Even though the attributes are deemed specific, it is not entirely clear what is meant by “product quality” or “ease of doing business”. Cryptic labels that summarize specific issues have to be carefully chosen for otherwise it will be impossible to interpret the results.

Customer facing staff in the research-sponsoring business will be able to help at the early stage of working out which attributes to measure. They understand the issues, they know the terminology and they will welcome being consulted. Internal focus groups with the sales staff will prove highly instructive. This internally generated information may be biased, but it will raise most of the general customer issues and is readily available at little cost.


It is wise to cross check the internal views with a small number of depth interviews with customers. Half a dozen may be all that is required.

When all this work has been completed a list of attributes can be selected for rating.

There are some obvious indicators of customer satisfaction beyond survey data. Sales volumes are a great acid test but they can rise and fall for reasons other than customer satisfaction. Customer complaints say something but they may reflect the views of a vociferous few. Unsolicited letters of thanks and anecdotal feedback via the salesforce are other indicators. These are all worthwhile indicators of customer satisfaction but on their own they are not enough. They are too haphazard and provide cameos of understanding rather than the big picture. Depth interviews and focus groups could provide very useful insights into customer satisfaction and be yet another barometer of performance. However, they do not provide benchmark data. They do not allow the comparison of one issue with another or the tracking of changes over time. For this, a quantitative survey is required.

The tool kit for measuring customer satisfaction boils down to three options, each with their advantages and disadvantages. The tools are not mutually exclusive and a self-completion element could be used in a face to face interview. So too a postal questionnaire could be preceded by a telephone interview that is used to collect data and seek co-operation for the self-completion element.

When planning the fieldwork, there is likely to be a debate as to whether the interview should be carried out without disclosing the identity of the sponsor. If the questions in the survey are about a particular company or product, it is obvious that the identity has to be disclosed. When the survey is carried out by phone or face to face, co-operation is helped if an advance letter is sent out explaining the purpose of the research. Logistically this may not be possible, in which case the explanation for the survey would be built into the introductory script of the interviewer.

If the survey covers a number of competing brands, disclosure of the research sponsor will bias the response. If the interview is carried out anonymously, without disclosing the sponsor, bias will result through a considerably reduced strike rate or guarded responses. This is usually overcome by the interviewer explaining at the outset that the sponsor will be disclosed at the end of the interview.

Customers express their satisfaction in many ways. When they are satisfied, they mostly say nothing but return again and again to buy or use more. When asked how they feel about a company or its products in open-ended questioning they respond with anecdotes and may use terminology such as delighted, extremely satisfied, very dissatisfied etc. Collecting the motley variety of adjectives together from open-ended responses would be problematic in a large survey. To overcome this problem market researchers ask people to describe a company using verbal or numeric scales with words that measure attitudes.

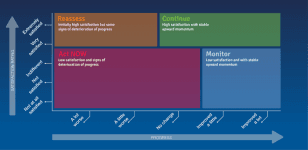


People are used to the concept of rating things with numerical scores and these can work well in surveys. Once the respondent has been given the anchors of the scale, they can readily give a number to express their level of satisfaction. Typically, scales of 5, 7 or 10 are used where the lowest figure indicates extreme dissatisfaction and the highest shows extreme satisfaction. The stem of the scale is usually quite short since a scale of up to 100 would prove too demanding for rating the dozens of specific issues that are often on the questionnaire.

Measuring satisfaction is only half the story. It is also necessary to determine customers’ expectations or the importance they attach to the different attributes, otherwise resources could be spent raising satisfaction levels of things that do not matter. The measurement of expectations or importance is more difficult than the measurement of satisfaction. Many people do not know or cannot admit, even to themselves, what is important. Can I believe someone who says they bought a Porsche for its “engineering excellence”? Consumers do not spend their time rationalizing why they do things. Their views change, and they may not be able to easily communicate or admit to the complex issues in the buying argument.

The same interval scales of words or numbers are often used to measure importance – 5, 7 or 10 being very important and 1 being not at all important. However, most of the issues being researched are of some importance for otherwise they would not be considered in the study. As a result, the mean scores on importance may show little differentiation between the vital issues such as product quality, price and delivery and the nice to have factors such as knowledgeable representatives and long opening hours. Ranking can indicate the importance of a small list of up to six or seven factors but respondents struggle to place things in rank order once the first four or five are out of the way. It would not work for determining the importance of 30 attributes.
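The lack of differentiation in stated importance can be seen in a minimal sketch like the one below. The ratings are invented purely for illustration (they are not survey results), and the function name `mean_importance` is a hypothetical helper, not part of any survey tool.

```python
from statistics import mean


def mean_importance(ratings):
    """Return the mean stated-importance score for each attribute."""
    return {attribute: mean(scores) for attribute, scores in ratings.items()}


# Hypothetical 1-10 importance ratings from four respondents (illustrative only).
stated = {
    "product quality": [10, 9, 10, 9],
    "price": [9, 9, 10, 8],
    "delivery": [9, 8, 9, 9],
    "long opening hours": [8, 8, 7, 9],
}

means = mean_importance(stated)

# Gap between the highest and lowest mean on a 10-point scale.
spread = max(means.values()) - min(means.values())

for attribute, score in sorted(means.items(), key=lambda item: item[1], reverse=True):
    print(f"{attribute}: {score}")
print(f"spread between top and bottom attribute: {spread}")
```

In this made-up data every attribute averages 8 or more out of 10, so the means alone say little about which issues are vital and which are merely nice to have.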

As a check against factors that are given a “stated importance” score, researchers can statistically calculate (or “derive”) the importance of the same issues. Derived importance is calculated by correlating the satisfaction levels of each attribute with the overall level of satisfaction. Where there is a high link or correlation with an attribute, it can be inferred that the attribute is driving customer satisfaction. Deriving the importance of attributes can show the greater influence of softer issues such as the friendliness of the staff or the power of the brand – things that people somehow cannot rationalize or admit to in a “stated” answer.
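The calculation of derived importance can be sketched as follows. This is one simple reading of the approach described above, assuming a Pearson correlation between each attribute's satisfaction scores and the overall satisfaction score; the source does not specify which correlation measure is used. The respondent scores and the names `pearson` and `derived_importance` are hypothetical and for illustration only.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists of scores."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    denominator = sqrt(var_x * var_y)
    # If either series never varies, the correlation is undefined; report 0.0 here.
    return covariance / denominator if denominator else 0.0


def derived_importance(attribute_scores, overall_scores):
    """Correlate each attribute's satisfaction scores with overall satisfaction."""
    return {
        attribute: pearson(scores, overall_scores)
        for attribute, scores in attribute_scores.items()
    }


# Hypothetical satisfaction scores from six respondents (illustrative only).
overall = [9, 7, 4, 8, 3, 6]
attributes = {
    "staff friendliness": [9, 7, 5, 8, 2, 6],
    "invoice clarity": [6, 7, 6, 5, 7, 6],
}

importance = derived_importance(attributes, overall)

# Attributes ordered from strongest to weakest link with overall satisfaction.
ranked = sorted(importance, key=importance.get, reverse=True)
for attribute in ranked:
    print(f"{attribute}: {importance[attribute]:.2f}")
```

In this invented data, satisfaction with staff friendliness moves closely with overall satisfaction while invoice clarity does not, so staff friendliness would be inferred to be the stronger driver. A correlation of this kind indicates association rather than proof of cause, so it is best read alongside the stated importance scores.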

In a 2021 survey of marketing, insight, CX and business strategy decision-makers at B2B brands, capturing customer satisfaction ratings was the most common method organizations used to measure customer loyalty.

Overall, 68% of organizations surveyed captured customer satisfaction ratings, while other key metrics such as customer retention, NPS and customer lifetime value were captured less frequently (60%, 51% and 31% respectively).

What’s important to note here is that CX Leaders (companies who are strong on at least 5 of B2B International’s 6 CX excellence indicators) are far more likely to capture customer satisfaction ratings compared to their average (87% vs 70%) or low performing counterparts (87% vs 54%).

This highlights the importance of measuring customer satisfaction if a brand wants to deliver a leading customer experience.